Publishers that share datasets through the DiGIR and TAPIR protocols are especially vulnerable to text fields that contain polluted data. Information about locality (http://rs.tdwg.org/dwc/terms/index.htm#locality) is often quite rich and can be copied from diverse sources, thereby entering the database table possibly without having been through a verification or a cleaning process. The locality string can be copy/pasted from a file into the locality column, or the data itself can be mass loaded infile, or it can be bulk inserted – each of these methods contains a risk that unintended characters enter the table.

Even if you have time and are meticulous, you could miss certain control characters because they are invisible to the naked eye. So what are publishers - some with limited resources – going to do to ferret out these xml breaking characters? Assuming that you have access to the MySQL database itself you can identify these pesky control characters by performing a few basic steps that involves creating a small table, inserting some hexadecimal values into it (sounds much harder than it is), and finally you run the query that picks out these ‘illegal’ characters from the table that you specify.

We start out with creating a table to hold the values for the problematic characters so that we can use them in a query:

CREATE TABLE control_char (

id int(4) NOT NULL AUTO_INCREMENT,

hex_val CHAR(2),

PRIMARY KEY(id)

) DEFAULT CHARACTER SET = utf8;

The DEFAULT CHARACTER SET declaration forces UTF-8 compliance which the regular expressions used later requires.

We then populate the table with these hex values that represent control characters:

INSERT INTO control_char (hex_val)

VALUES

('00'),('01'),('02'),('03'),('04'),('05'),('06'),('07'),('08'),('09'),('0a'),('0b'),('0c'),('0d'),('0e'),('0f'),

('10'),('11'),('12'),('13'),('14'),('15'),('16'),('17'),('18'),('19'),('1a'),('1b'),('1c'),('1d'),('1e'),('1f')

;

You can read more about these values here: http://en.wikipedia.org/wiki/C0_and_C1_control_codes

At this point you may ask why the control_char table is not a temporary table as you might not want it to be a permanent feature in the database. The reason for this is sadly that MySQL has a long standing bug that prevents a temporary table from being referenced more than once; http://dev.mysql.com/doc/refman/5.0/en/temporary-table-problems.html and we have to reference it more than once as you will see later.

Now on to the main query – these declarations test the table and column that you specify against the control_char table:

SELECT t1.* FROM scinames_harvested t1, control_char

WHERE LOCATE(control_char.hex_val , HEX(t1.scientific_name)) MOD 2 != 0;

The query references two tables; one is a table of roughly 5000 records containing a record primary key, scientific_name and some other columns. Some of the scientific name strings are polluted with characters that we want to get rid of. The second table contains the control characters.

The way we ensure that the LOCATE function tests for value pairs two steps at the time is by using the modulus keyword MOD. Remember we want to look through the scientific_name char string after it has been converted to hexadecimal values (HEX) that consist of value pairs. We don’t want to test across value pairs!

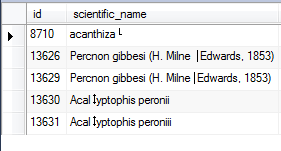

Running the query, in this instance, gives me five records with characters that are not kosher:

This is pretty neat if the alternative is eyeballing each and every record.

Note that I cannot guarantee that this will properly process every character from the UTF-8 Latin-1 supplement http://en.wikipedia.org/wiki/C1_Controls_and_Latin-1_Supplement

If you want to create a test table and try out the queries above, this UPDATE query template will change the string into something containing control characters:

UPDATE your_table SET your_column = CONCAT('Adelotus brevis', X'0B') WHERE id = 12345;In the CONCAT declaration the second part looks funny, but you have to remember that the X in front of '0B' tells MySQL that a hex value is coming. In this case it is a line-tabulation character: www.fileformat.info/info/unicode/char/000b/index.htm. This part can be edited to other values for test purposes. Naturally the CONCAT function can take n number of strings for concatenation.